- “Secularism and Religious Accommodation in Modern Algeria: The Case of Abd al-Qadir al-Jazairi” by Jeremy B. E. DeLong. Journal of North African Studies, vol. 21, no. 5, 2016, pp. 758-772.

- “The Return of Abd al-Qadir al-Jazairi to Algeria as a Secular National Symbol” by Noora Lori. Middle Eastern Studies, vol. 55, no. 2, 2019, pp. 207-222.

- “Conceptualizing Islam in Relation to the Secular: The Case of Abdelkader al-Jazairi” by Michaelle Browers. Islam and Christian–Muslim Relations, vol. 20, no. 2, 2009, pp. 129-142.

- “Revisiting the Religious and Secular in Algeria: Abdulhamid Ben Badis and Abdelkader al-Jaza’iri” by Amir Ahmadi. Journal of Islamic Studies, vol. 30, no. 3, 2019, pp. 355-377.

- “Islam and the Secular State: The Emir Abdel Qadir and the French Occupation of Algeria” by Richard M. Eaton. The Journal of Religious History, vol. 14, no. 3, 1988, pp. 308-322.

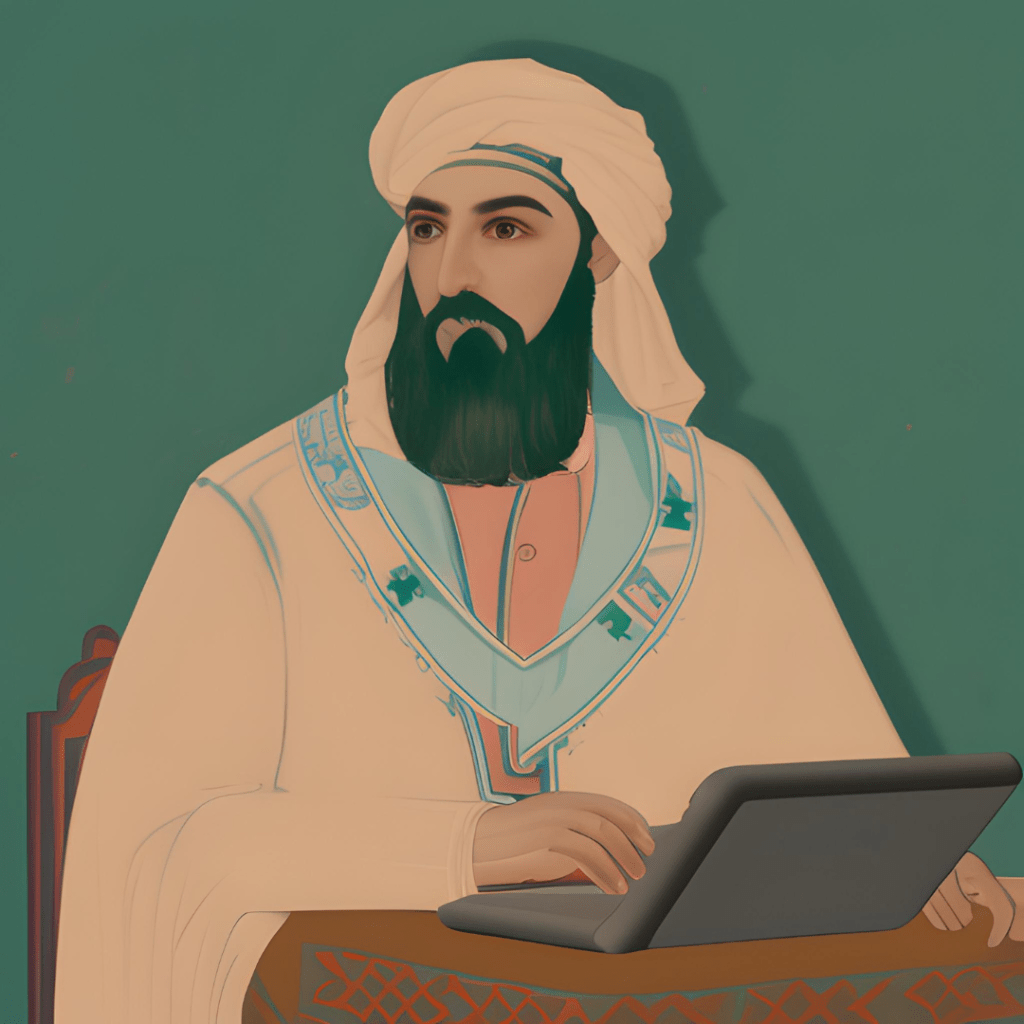

Have a look at the list of references above, especially if you are an academic in Middle Eastern studies or a related field. Do you notice anything suspicious about them? Unless you are a specialist on Emir Abdelkader (also written as Abd al-Qadir al-Jaza’iri, a nineteenth-century Algerian anticolonial hero), they would probably all seem legit to you. Only they aren’t! Every one of these references was made up by ChatGPT and, admittedly (or perhaps annoyingly, or even alarmingly), made up very well.
